In November 2025, Sam Altman appeared on a podcast alongside OpenAI investor Brad Gerstner. When Gerstner challenged him on how a company with $13 billion in revenue could justify $1.4 trillion in spending commitments, it did not go well. Three months later, OpenAI quietly cut that commitment to $600 billion.

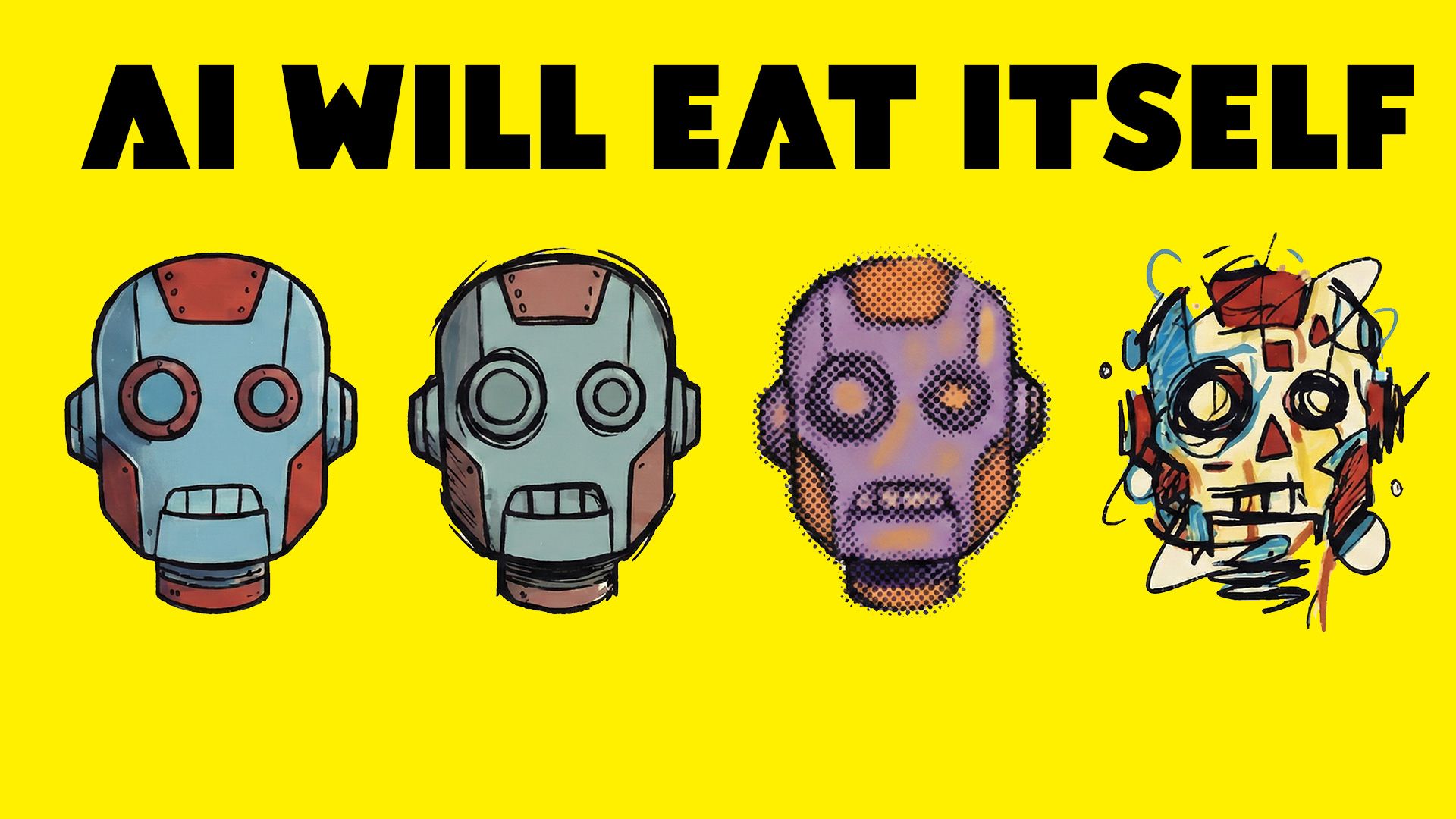

We are living through what may be the largest capital allocation event in human history. Elon Musk says AGI will arrive this year. Dario Amodei describes a 'country of geniuses in a datacenter' within two years. Sam Altman declared in January 2025 that OpenAI was 'confident we know how to build AGI,' before saying seven months later that AGI was 'not a super useful term.'

These are the biggest predictions in the history of technology. And they are being made by people whose companies have yet to demonstrate that their business models work. So can we actually believe anything these people say?

Each major AI narrative has a convenient commercial function:

Claiming that AI will replace workers raises the perceived value of AI over human labour.

Insisting there is no bubble protects share prices.

Predicting imminent AGI justifies enormous capital expenditure.

Warning about China encourages governments to loosen regulation.

Whatever an AI CEO says in a headline, it is almost certainly in their commercial interest.

The commercial incentive

Ken Griffin, founder of hedge fund Citadel, offered a more grounded perspective at a recent World Economic Forum panel. When asked about AI's productivity impact, he noted that most business leaders claiming AI was changing their operations were not actually using generative AI at all. The real story, he argued, was a broader wave of digitisation.

On Amodei's prediction that half of all entry-level white-collar jobs would disappear within five years, Griffin explained that a trillion dollars in US data centre spending requires a very large promise to justify it. 'How else are you going to get people to write $500 billion of checks just this year alone? There needs to be a level of like AI is your saviour almost.'

Ken Griffin's remarks at Davos offer a more grounded view in a world of hype.

Griffin's firm uses AI extensively in its trading operations, so he has every reason to want the technology to succeed. But he is a buyer of AI, not a seller of it. And buyers tend to be more honest about what they are getting. He also referenced what researchers call 'AI work slop': output that looks impressive on the surface but falls apart under scrutiny.

And the fundraising numbers tell their own story. OpenAI recently closed a $110 billion funding round - the largest private round ever. Anthropic raised $30 billion. These figures are not justified by current performance. They are bets on a future the CEOs themselves are describing.

The track record

Incentives alone don't prove dishonesty. What matters is whether there is a track record of accurate predictions. On that front, the evidence is not encouraging.

Elon Musk promised a fully autonomous Los Angeles to New York Tesla drive by late 2017:

It was eventually completed by an independent owner in January 2026.

He planned for humans on Mars by 2024:

There is still no clear timeframe.

In 2024, he predicted AGI would arrive by 2025:

When 2025 passed without it, he pushed the timeline to 2026.

At Davos in January 2026, he claimed AGI could arrive by December.

Dario Amodei is in some ways the most interesting case. He avoids the term AGI, calling it a 'marketing term,' but then describes what is effectively the same concept under different branding - AI replacing most software developers within a year and conducting Nobel-level research within two.

In other words, he recognises that AGI is a marketing term, then markets the same idea in different words.

OpenAI also announced it would introduce ads into ChatGPT. For a company supposedly on the verge of creating the most transformative technology in history, the operational reality looks a lot more like a conventional tech company scrambling for revenue.

The sceptics and the money trail

The most striking pattern is this: the biggest voices with the longest timelines tend to work at companies that actually make money.

Yann LeCun, Meta's former Chief AI Scientist, backed by tens of billions in advertising revenue, says we 'can't even build cat-level intelligence yet' and puts the timeline at several years if not a decade. Demis Hassabis at Google DeepMind puts AGI at a 50% chance by 2030.

The companies making the most aggressive predictions - OpenAI, xAI, Anthropic - are the ones burning through capital without established revenue streams. Without the promise of a transformative breakthrough just around the corner, the spending makes no sense.

Academic research adds further context. Economist Daron Acemoglu estimated that AI-driven automation would increase total factor productivity by no more than 0.66% over ten years. An MIT study found that 95% of companies running AI pilot studies reported little or no return on investment. These are not numbers that support the claims being made.

This doesn't mean everything AI leaders say is wrong. Coding tools are genuinely useful. Year-on-year capability improvements are real.

But there is a vast gap between 'AI is an increasingly powerful tool' and 'AGI is two years away.'

The first is supported by evidence.

The second requires you to believe that multiple unsolved problems will all resolve within a timeframe that conveniently aligns with current fundraising needs.

When the people making the boldest predictions have hundreds of billions of dollars riding on you believing them, a little scepticism seems warranted.