The Pentagon is threatening to sever its contract with Anthropic and designate the company a "supply chain risk" after months of failed negotiations over how the US military can use its AI model, Claude. The designation, usually reserved for foreign adversaries like Chinese firms, would force every Pentagon contractor and vendor to certify they do not use Anthropic's technology.

The dispute centres on two red lines Anthropic refuses to cross: mass domestic surveillance and fully autonomous weapons that fire without human involvement. The Pentagon insists on unrestricted access for "all lawful use" of any AI model it deploys. Defence Secretary Pete Hegseth has made clear he will not work with companies that limit military applications of their technology.

What went down

Anthropic's Claude was used during the January operation to capture Nicolás Maduro in Venezuela.

An Anthropic executive reportedly asked Palantir whether Claude was used in the raid, alarming Pentagon officials.

The Pentagon is now reviewing Anthropic's $200m contract and considering a supply chain risk label.

Rival labs OpenAI, Google and xAI have agreed to "all lawful use" terms in unclassified systems.

Elon Musk called Anthropic "insufferably woke and sanctimonious AI," declaring Grok "must win."

Why does this matter?

Claude is currently the only frontier AI model deployed on the Pentagon's classified networks, through a partnership with Palantir. Replacing it would be, in the Pentagon's own words, "massively disruptive." The military praised Claude's capabilities even as it threatened to ban it.

A supply chain risk designation would extend far beyond the $200m defence contract. Eight of the ten largest US companies use Claude. Every Pentagon contractor would need to certify they have removed Anthropic from their workflows, creating ripple effects across the private sector.

Our take

This story exposes a fundamental tension that will define the AI industry for years. Anthropic built its reputation on safety-first principles. Those same principles are now being used against it by an administration that views guardrails as obstacles to military advantage. The other leading labs have already agreed to the Pentagon's terms, at least for unclassified systems, leaving Anthropic isolated as the only company maintaining red lines around autonomous weapons and domestic surveillance.

The broader implication goes well beyond one company's contract. If the Pentagon succeeds in establishing "all lawful use" as the industry standard, it removes AI companies from any oversight role in how their models are deployed in classified operations. Anthropic CEO Dario Amodei has argued that democracies should use AI for defence, but carefully and within limits. The Pentagon's position is that those limits should be set by the military alone. How this resolves will shape the relationship between Silicon Valley and the national security state for the next decade.

Another big thing… We are here.

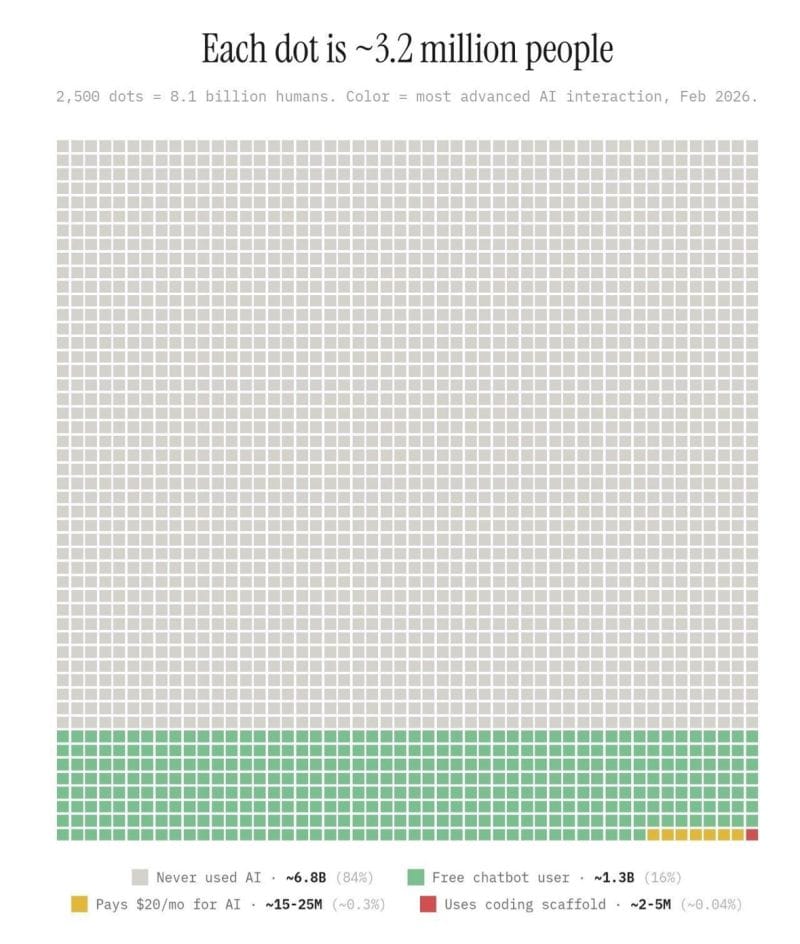

Not strictly news, but this chart is being shared by A LOT of people on social media, with the basic point that when you zoom out from the AI hype, we really are very early in adoption. According to the chart, 84% of the world’s population has never used AI, although exactly where the figure came from is difficult to know. But it’s certainly plausible.

Go even more Agentic on YouTube

AI safety has suddenly come into focus, and Anthropic are doing their best to stay true to their values and be transparent about model behaviour. But as we saw in the last couple of weeks, it might not be enough.