In 1993, the science fiction writer Vernor Vinge stood before a NASA symposium and predicted that within 30 years, technology would create entities with greater-than-human intelligence. When that happened, he said, human history as we understand it would end. He called it the Singularity.

Vinge's window closed in 2023. He died the following March. The Singularity had not arrived in the form he imagined, yet within months of that deadline the leaders of OpenAI, Anthropic and Google DeepMind were predicting human-level AI inside two to five years.

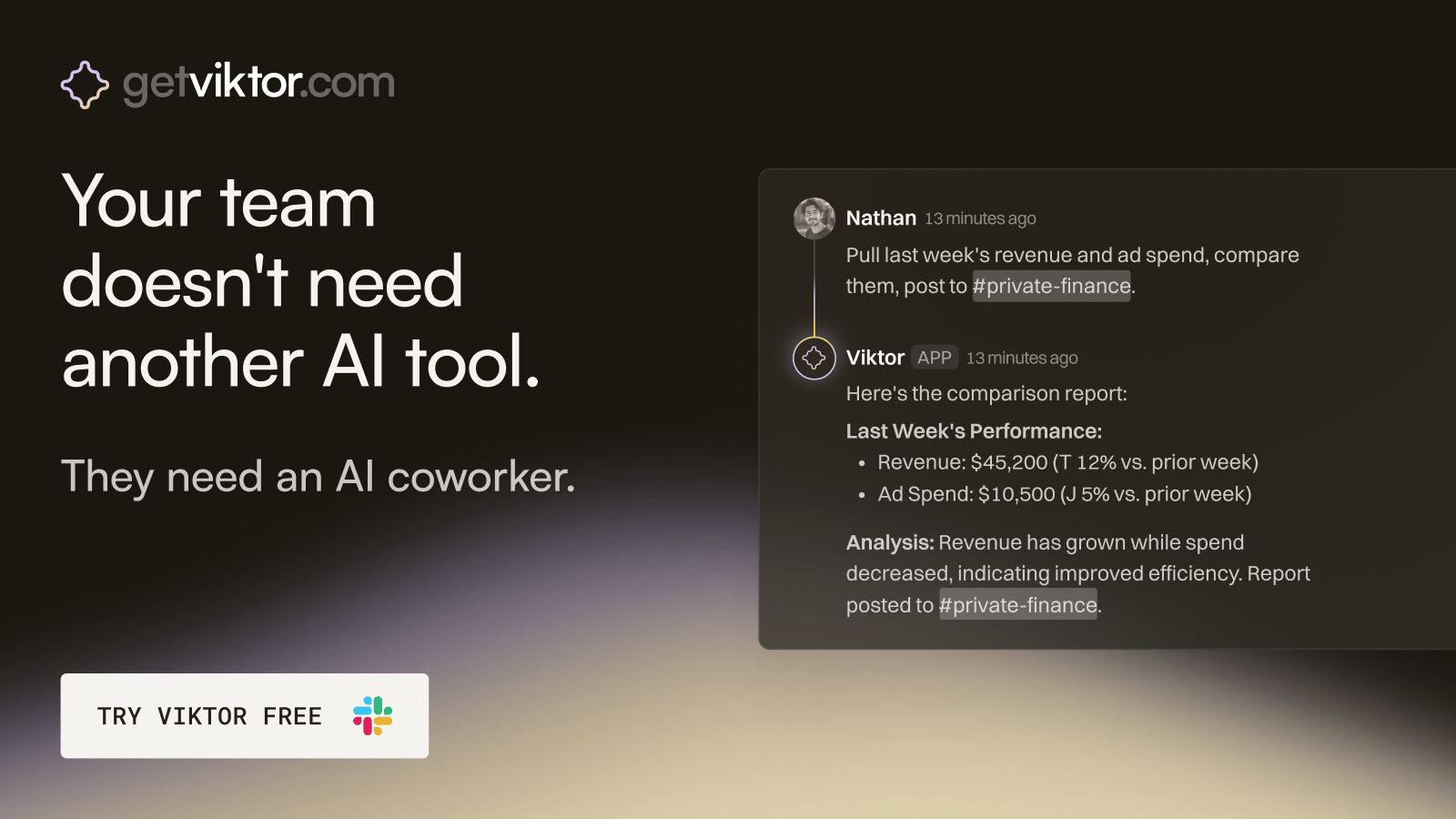

The idea has older roots. In the 1950s, the mathematician Stan Ulam recalled a conversation with John von Neumann about the ever-accelerating pace of technology, heading toward a point beyond which human affairs could not continue.

In 1965, the British mathematician I.J. Good formalised the logic. An ultraintelligent machine could design machines better than itself, producing what he called an intelligence explosion. The intelligence of man would be left far behind.

Good added a caveat that often gets dropped from the quotation. This would only work, he wrote, provided the machine remained docile enough to tell us how to keep it under control.

Three ideas that often get tangled

The first is accelerating change. The gap between writing and the printing press was thousands of years. Between the printing press and the telegraph, hundreds. Between the telegraph and the internet, little more than a century. Between the internet and generative AI, a few decades.

The second is the intelligence explosion itself. Once a machine is intelligent enough to improve its own design, it produces a better version, which produces a better version, and so on. Each cycle happens faster than the last.
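A toy model makes the "each cycle happens faster" step concrete. It is a minimal sketch under assumptions of our own, not Vinge's or Good's: intelligence is a single number, each redesign multiplies it by a fixed factor, and the time a redesign takes is inversely proportional to the designer's intelligence. Under those assumptions the cycle times form a geometric series, so an unlimited number of cycles fits into a finite span of time.

```python
# Toy model of an intelligence explosion (illustrative assumptions only):
# - intelligence is a single number, starting at 1.0
# - each redesign multiplies intelligence by (1 + gain)
# - a redesign takes base_time / intelligence, so smarter designers work faster


def explosion(gain: float, base_time: float, cycles: int) -> list[tuple[float, float]]:
    """Return (elapsed_time, intelligence) after each redesign cycle."""
    intelligence = 1.0
    elapsed = 0.0
    history = []
    for _ in range(cycles):
        elapsed += base_time / intelligence  # duration of this cycle
        intelligence *= 1 + gain             # the new, better designer
        history.append((elapsed, intelligence))
    return history


if __name__ == "__main__":
    for t, level in explosion(gain=0.5, base_time=1.0, cycles=10):
        print(f"t = {t:.3f}  intelligence = {level:.1f}")
    # Elapsed time approaches base_time * (1 + gain) / gain = 3.0 without reaching it,
    # while intelligence keeps growing without bound.
```

The finite limit on elapsed time is the arithmetic behind the word "singularity": in this model, infinitely many generations arrive before a fixed date. Change the assumption that cycles speed up and the limit disappears.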

The third is Vinge's Singularity proper. A point beyond which prediction is genuinely impossible, because the entities driving change would be as far beyond us intellectually as we are beyond goldfish. Our existing models simply would not apply.

Vinge identified four routes to superhuman intelligence. Conscious AI was one. Large computer networks waking up was another. The third was human-computer interfaces so intimate that their users could qualify as superhumanly intelligent, and the fourth was direct biological enhancement of human intellect.

He thought the human-machine route was the most likely near-term path. Watch an AI coding agent work for fifteen minutes, correcting its own errors, and you have some sense of what he was pointing toward.

The logic and the pushback

In 2010, the philosopher David Chalmers published a rigorous analysis in the Journal of Consciousness Studies. He broke the Singularity argument into three premises: equivalence, extension and amplification. Each, he argued, is individually plausible.

The crucial assumption linking them is what Chalmers called the proportionality thesis. Increases in intelligence produce proportional increases in the capacity to design more intelligent systems. If doubling intelligence roughly doubles design capacity, the explosive cycle holds.
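Written as a recurrence, the proportionality thesis shows why the doubling matters. The notation is a simplified formalisation of our own, not Chalmers's: $I_n$ is the intelligence of the $n$-th generation of designer, and each generation improves on itself by an amount proportional to its own intelligence, with some constant $c > 0$.

$$
I_{n+1} = I_n + c\,I_n = (1 + c)\,I_n
\quad\Longrightarrow\quad
I_n = (1 + c)^n\, I_0
$$

Because the improvement $c\,I_n$ doubles whenever $I_n$ doubles, the ratio $I_{n+1}/I_n = 1 + c$ never shrinks, and intelligence grows geometrically with each generation. Weaken the link between intelligence and design capacity and that constant ratio is the first thing to go.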

This is where the argument gets contested. David Thorstad, a philosopher at Oxford's Global Priorities Institute, published a detailed critique in 2022. He argued that the proportionality thesis rests on a single kind of evidence: historical leaps made while low-hanging fruit was still available.

The leap from no-technology to some-technology is enormous. The leap from very-good-technology to slightly-better-technology may not follow the same curve. Thorstad's case is that good ideas become harder to find as the easy ones get used up.
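A small simulation shows how much hangs on that assumption. It is a sketch under assumptions of our own, not Thorstad's model: each generation adds an improvement of `c * level ** alpha` to its intelligence. With `alpha = 1.0` that is the proportionality thesis; with `alpha < 1.0`, each further gain in intelligence buys a smaller relative improvement, one crude way to represent good ideas becoming harder to find.

```python
# Compare recursive self-improvement under two hypothetical returns assumptions.
# Each generation adds c * level**alpha to intelligence:
#   alpha = 1.0 -> proportional returns (the proportionality thesis)
#   alpha < 1.0 -> diminishing returns (each gain is harder to find)


def grow(alpha: float, c: float = 0.5, generations: int = 20, start: float = 1.0) -> float:
    """Return intelligence after the given number of self-improvement generations."""
    level = start
    for _ in range(generations):
        level += c * level ** alpha
    return level


if __name__ == "__main__":
    for alpha in (1.0, 0.5):
        print(f"alpha = {alpha}: intelligence after 20 generations = {grow(alpha):.1f}")
```

Under proportional returns the model grows geometrically, to `1.5 ** 20` after twenty generations. Under the diminishing-returns variant it still grows, but only roughly quadratically for `alpha = 0.5`, which is steady progress rather than an explosion.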

Moore's Law itself is a case study. Between 1971 and 2014, the doubling of transistor density was sustained only through an eighteen-fold increase in semiconductor research effort. The apparent constancy of progress masked an accelerating cost.
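The figures in that paragraph can be turned into annual rates. This is simple compounding arithmetic on the two numbers given (a 43-year span and an eighteen-fold rise in effort), assuming the pace of density doublings stayed constant over the whole period.

```python
# Figures from the text: 1971 to 2014, and an eighteen-fold rise in research effort
# while transistor density kept doubling at a steady pace.
years = 2014 - 1971  # 43 years
effort_ratio = 18.0

# Compound annual growth in research effort needed to keep progress constant.
effort_growth = effort_ratio ** (1 / years) - 1

# If the rate of progress stayed constant while effort rose 18x, output per unit
# of effort (research productivity) fell by the same overall factor.
productivity_decline = 1 - 1 / effort_ratio ** (1 / years)

print(f"research effort grew about {effort_growth:.1%} per year")
print(f"research productivity fell about {productivity_decline:.1%} per year")
```

Roughly 7% more research effort every year, just to stand still on the rate of progress, is what "an accelerating cost" means in practice.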

What happens if it does arrive

Science fiction writers felt the problem first. Vinge noted that authors who tried to write stories set after the Singularity hit an opaque wall. You cannot write convincing characters who are thousands of times smarter than you.

Chalmers outlined four options for humanity. Extinction. Isolation, in which we persist but become irrelevant. Inferiority, in which we coexist with superintelligence in a position analogous to animals in a human world. Or integration, in which we merge with the technology.

Thorstad, for his part, doubts that it will arrive at all. He raises four empirical objections to the growth assumptions behind the argument. Good ideas become harder to find. Bottlenecks constrain systems. Physical limits are real: transistors are already only about ten times the diameter of an atom.
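The "ten times the diameter of an atom" figure can be turned into a rough count of how much shrinking remains. This is back-of-envelope arithmetic under simplifying assumptions of our own: a feature cannot be narrower than one atom, and transistor density scales with the inverse square of feature width.

```python
import math

# Assumption (illustrative): features are ~10 atoms wide and cannot go below 1 atom.
headroom_linear = 10.0

# Feature width can be halved only log2(10) times before reaching one atom.
width_halvings = math.log2(headroom_linear)

# Density scales with area, i.e. with the square of the linear shrink factor.
density_doublings = math.log2(headroom_linear ** 2)

print(f"width halvings left:    {width_halvings:.1f}")
print(f"density doublings left: {density_doublings:.1f}")
```

Real devices can also gain density by stacking in three dimensions, which this flat model ignores. The point is only that a tenfold linear margin amounts to a handful of doublings, not an open-ended supply.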

His fourth point draws on research by Neil Thompson and colleagues. Across chess, Go, protein folding and weather prediction, exponential growth in computing power has produced only linear improvements in performance. You put exponentially more in and get only linearly more out.
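One way to read "exponential in, linear out" is that performance rises by a roughly fixed step for each doubling of compute, so performance tracks the logarithm of compute. The sketch below assumes exactly that relationship with made-up constants; it is not a fit to Thompson and colleagues' data.

```python
import math

# Hypothetical model: performance gains a fixed step per doubling of compute.
BASE_SCORE = 0.0         # illustrative constants, not fitted to any real benchmark
STEP_PER_DOUBLING = 1.0


def performance(compute: float) -> float:
    """Performance as a linear function of log2(compute)."""
    return BASE_SCORE + STEP_PER_DOUBLING * math.log2(compute)


if __name__ == "__main__":
    # Compute grows exponentially (x2 each row); performance rises by a constant step.
    for doublings in range(6):
        compute = 2.0 ** doublings
        print(f"compute x{compute:>4.0f} -> performance {performance(compute):.1f}")
```

Under this model a thousandfold increase in compute buys only about ten steps of improvement, since each step costs twice as much as the one before.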

Whether you find the Singularity compelling may depend on which evidence you weight most heavily. The honest answer is that we do not know. There is also a third possibility beyond arrival or non-arrival: that it does happen and we fail to recognise it.